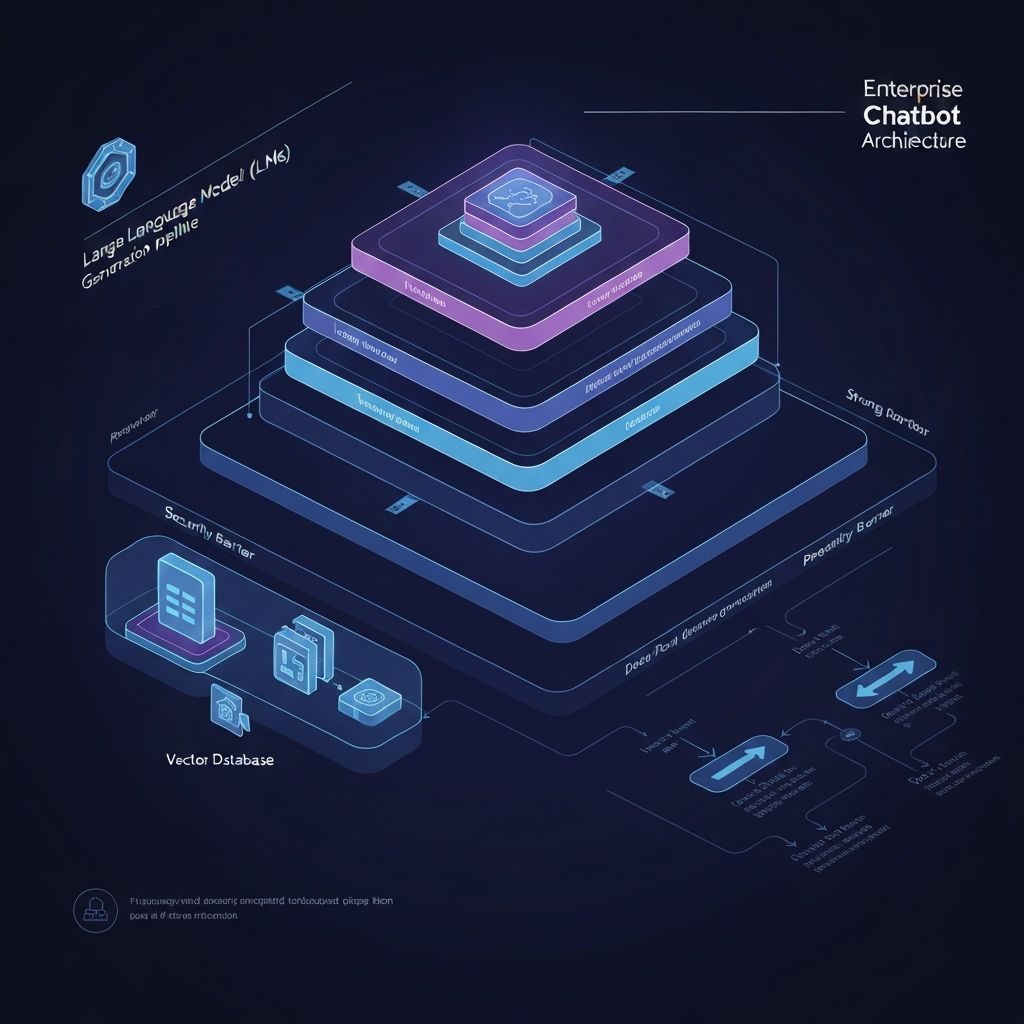

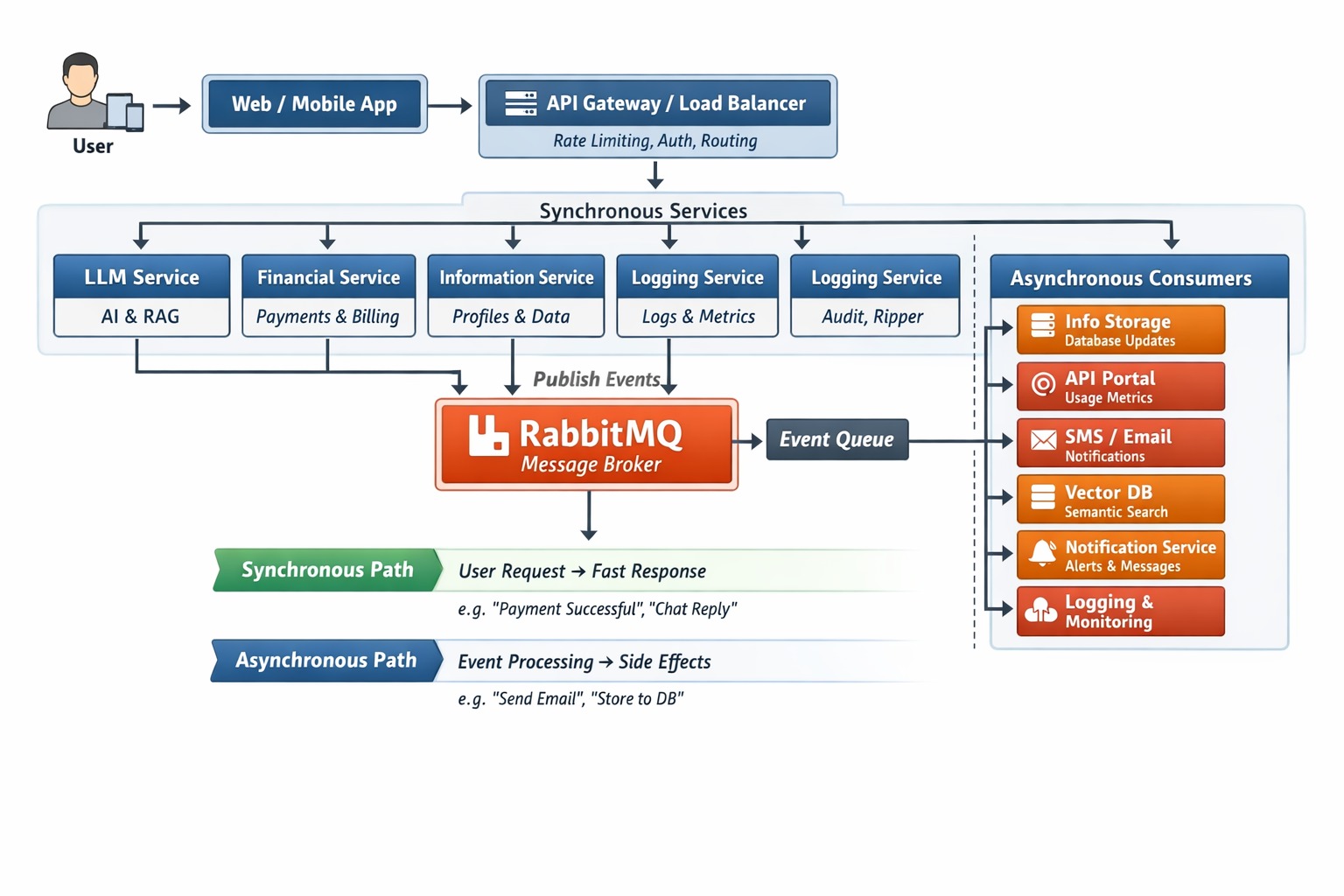

LLM-Integrated Conversational AI Platform with RAG Architecture

• Single-layer chatbot logic failed on complex intent, context carryover, and transactional safety.
• Built a confidence-gated AI stack with deterministic routing, NLP layers, RAG grounding, and bounded LLM use.
• Improved first-response intent accuracy by 45-60% and reduced hard-failure paths by 80%+.

Executive Snapshot

Role: Systems Architect & Lead Fullstack Engineer

Duration: Enterprise Production System

Team: Systems Architect + Cross-functional Bot & Ops Support

Hosting: AWS Lambda, DigitalOcean Droplet

CI/CD & Infra: GitHub Actions, Docker

Backend: FastAPI (RAG Orchestration), Node.js (Webhook Router), PHP (Commerce & Payment APIs)

Frontend: React.js

Databases: Redis (Session & Cache), MongoDB (Conversation Logs), MySQL (Commerce Data), Qdrant (Vector DB)

AI Stack: Lavoisier Deterministic Lookup, Wit.ai, Llama, OpenAI GPT, LangChain, PyTorch (Sentiment), RAG Pipeline

Analytics & Security: Looker Studio, Cloudflare Turnstile

Designing a confidence-gated, multi-model AI architecture that balances deterministic logic, NLP, and LLM inference while enforcing strict data governance and transactional integrity.
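
The case study does not show the routing logic itself, so the following is only a minimal sketch of what a confidence-gated cascade of this kind might look like. Every name, threshold, and intent label (`route`, `NLP_CONFIDENCE_THRESHOLD`, `RETRIEVAL_SCORE_THRESHOLD`, `TRANSACTIONAL_INTENTS`, and the `classify`, `retrieve`, and `generate` callables) is a hypothetical stand-in, not the platform's actual code or any library's API.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical thresholds and intent names, chosen for illustration only.
NLP_CONFIDENCE_THRESHOLD = 0.80
RETRIEVAL_SCORE_THRESHOLD = 0.70
TRANSACTIONAL_INTENTS = {"make_payment", "cancel_order"}


@dataclass
class Reply:
    layer: str  # which layer produced the answer
    text: str


def route(
    message: str,
    lookup: dict[str, str],
    classify: Callable[[str], tuple[str, float]],
    retrieve: Callable[[str], tuple[Optional[str], float]],
    generate: Callable[[str, str], str],
) -> Reply:
    """Try the cheapest, most predictable layer first; escalate only on a miss."""
    # 1. Deterministic layer: exact matches never reach a model.
    key = message.strip().lower()
    if key in lookup:
        return Reply("deterministic", lookup[key])

    # 2. NLP layer: accept the classified intent only above the confidence gate.
    intent, confidence = classify(message)
    if confidence >= NLP_CONFIDENCE_THRESHOLD:
        return Reply("nlp", f"intent:{intent}")

    # Transactional safety (assumed policy): a low-confidence guess at a
    # transactional intent goes to a human rather than to the LLM.
    if intent in TRANSACTIONAL_INTENTS:
        return Reply("handoff", "Routing you to a human agent.")

    # 3. RAG layer: call the LLM only when retrieval found grounded context.
    context, score = retrieve(message)
    if context is not None and score >= RETRIEVAL_SCORE_THRESHOLD:
        return Reply("rag_llm", generate(message, context))

    # 4. Bounded fallback: no ungrounded generation.
    return Reply("fallback", "Could you rephrase that?")
```

In this sketch the LLM is reachable only through the retrieval gate, which is one way to keep its use bounded; a production system would likely add session context and logging around each layer.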

Gallery

Note: This is a conceptual representation of an enterprise revenue governance platform. All branding, data, and identifiers have been modified for confidentiality purposes.
